“The secrets to the next great scientific breakthrough, industry-changing innovation, or life-altering technology hide in plain sight behind the mountains of data we create every day,” said Meg Whitman, CEO, Hewlett Packard Enterprise.

“To realize this promise, we can’t rely on the technologies of the past, we need a computer built for the Big Data era.”

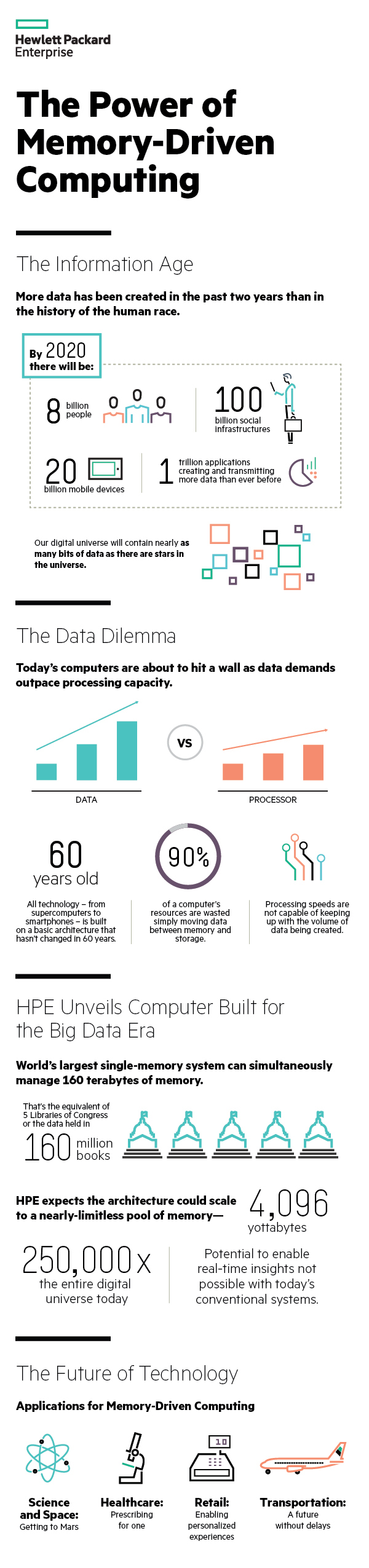

The company unveiled a prototype with 160 terabytes (TB) of memory, capable of working with huge amounts of data simultaneously. HPE states that no system to date has been able to hold and manipulate whole data sets of this size in a single-memory system, and that this is just a glimpse of what the machine can do.

What can it do?

HPE expects the architecture could easily scale to an exabyte-scale single-memory system and, beyond that, to a nearly limitless pool of memory – 4,096 yottabytes, which the company puts at 250,000 times the entire digital universe today.
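To put those figures in proportion, the short Python sketch below works through the arithmetic. It assumes decimal (SI) byte units, which the article does not specify, and the variable names are illustrative only. It derives the size of the "digital universe" implied by the 250,000-times claim and shows how far each scaling step is from the 160 TB prototype.

```python
# Decimal (SI) byte units. This is an assumption: the article does not say
# whether it means decimal or binary prefixes.
TB = 10**12
EB = 10**18
ZB = 10**21
YB = 10**24

prototype_bytes = 160 * TB   # prototype memory, from the article
pool_bytes = 4096 * YB       # projected memory pool, from the article

# Size of the "digital universe" implied by the 250,000x claim, in zettabytes.
implied_universe_zb = (pool_bytes // 250_000) / ZB

# How many times larger each scaling step is than the 160 TB prototype.
exabyte_vs_prototype = EB // prototype_bytes
pool_vs_prototype = pool_bytes // prototype_bytes

print(f"Implied digital universe: {implied_universe_zb:.3f} ZB")
print(f"1 EB is {exabyte_vs_prototype:,}x the prototype")
print(f"4,096 YB is {pool_vs_prototype:.2e}x the prototype")
```

Under these assumptions, the claim implies a digital universe of roughly 16 zettabytes, and a single exabyte is already 6,250 times the prototype's memory.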

According to the company, with that amount of memory it will be possible to work simultaneously with every digital health record of every person on earth; every piece of data from Facebook; every trip of Google’s autonomous vehicles; and every data set from space exploration.

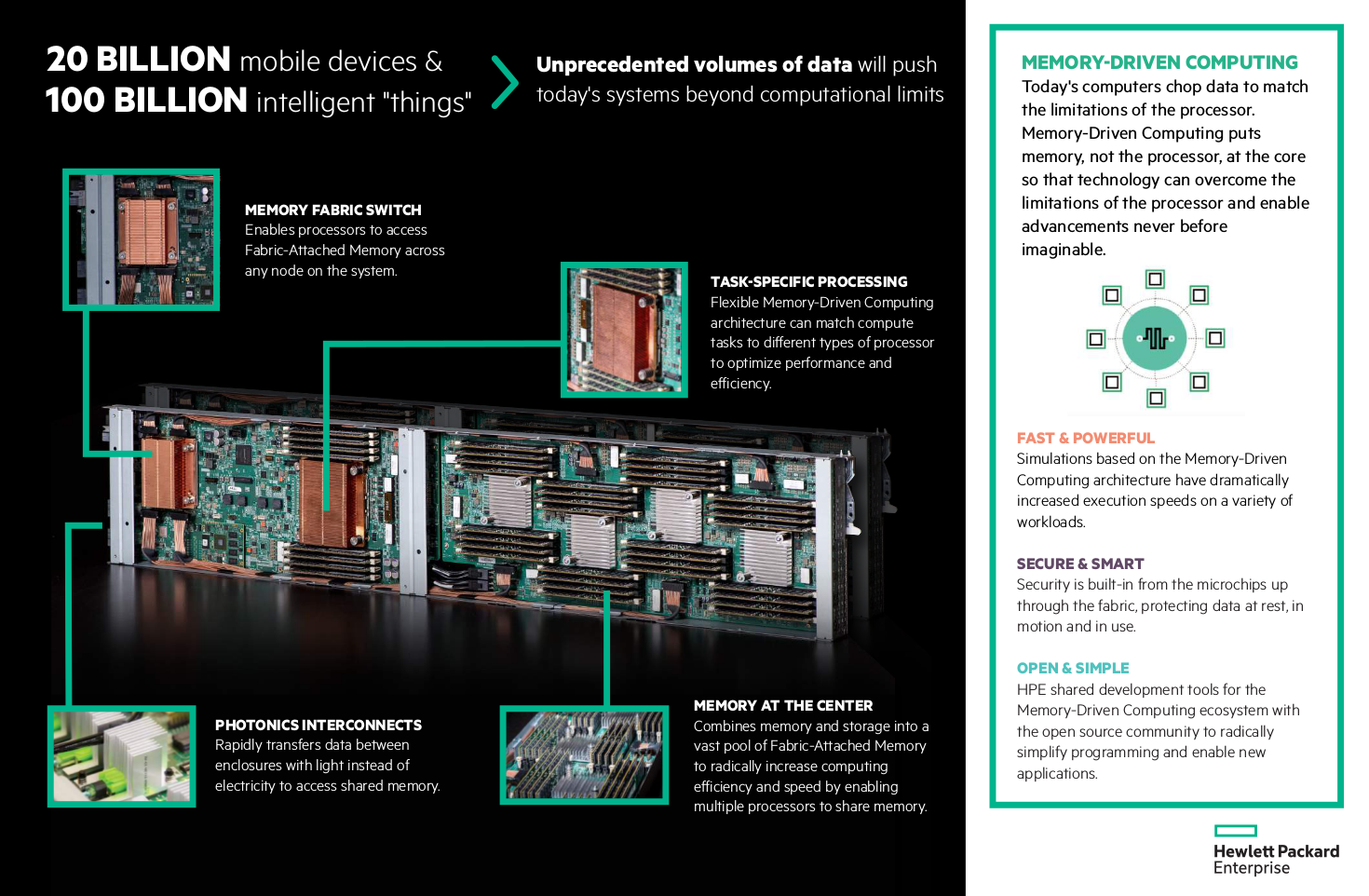

Memory-Driven Computing puts memory, not the processor, at the center of the computing architecture. By eliminating the inefficiencies in how memory, storage and processors interact in traditional systems today, HPE claims, the approach reduces the time needed to process complex problems and deliver real-time intelligence.

“We believe Memory-Driven Computing is the solution to move the technology industry forward in a way that can enable advancements across all aspects of society,” said Mark Potter, CTO, HPE and Director, Hewlett Packard Labs.

“The architecture we have unveiled can be applied to every computing category – from intelligent edge devices to supercomputers.”

What’s under the hood?

The new prototype builds on the achievements of The Machine research program. It includes 160 TB of shared memory spread across 40 physical nodes, interconnected using a high-performance fabric protocol. It runs an optimized Linux-based operating system (OS) on ThunderX2, Cavium’s flagship second-generation, dual-socket-capable, ARMv8-A workload-optimized System on a Chip. Its photonics/optical communication links, including the new X1 photonics module, are online and operational, and it comes with software programming tools designed to take advantage of abundant persistent memory.
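As a rough sanity check on those numbers, the sketch below computes the average memory per node. It assumes the 160 TB is split evenly across the 40 nodes, which the article does not state, and the names are illustrative.

```python
TOTAL_MEMORY_TB = 160   # shared memory, from the article
NODE_COUNT = 40         # physical nodes, from the article

# Assumes an even split across nodes; the article does not confirm this.
per_node_tb = TOTAL_MEMORY_TB / NODE_COUNT

print(f"{per_node_tb:g} TB per node on average")
```

That works out to 4 TB per node on average, all of it reachable as one shared pool over the fabric.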